SEO Best Practices

Best practices and tips for learning Search Engine Optimization by yourself.

What is SEO?

SEO is an acronym that stands for Search Engine Optimization. As such, SEO is defined as any type of activity that enhances the search engine visibility of your business and digital assets (e.g. website, Google Maps listing, mobile app).

Search Engine Optimization encompasses the following two strategies: (1) on-page optimization, and (2) off-page optimization. Examples of on-page optimization include: content creation (i.e. writing articles, blogging), incorporating desired keywords within the web page, optimizing title tags and optimizing META tags. Examples of off-page optimization include: link-building, citation building and Google My Business optimization.

Unfortunately, it is not possible to guarantee search engine rankings because they are always changing. But if you take into consideration all of the criteria involved in SEO – and perform the tasks ethically – your rankings should be just around the corner.

White and Black Hat SEO

Search engine optimization is not an overnight job. It requires a well-orchestrated content and link building strategy, as well as the patience to monitor your progress over several months. Before jumping into the SEO arena, it is extremely important to distinguish between White Hat SEO and Black Hat SEO techniques.

White Hat SEO refers to an SEO strategy in which no links are artificially created and the website creator abides by all search engine rules. The biggest rule is that you target your website content towards humans first and search engines second. For example, don’t just write filler, thin or otherwise weak content stuffed with awkwardly positioned keywords. Think about what the human user will want to experience when they visit your website. As long as you obey the policies of the major search engines, you are a White Hat SEO’er. If you focus on human visitors, you’ll receive links from other websites which will fuel your strategy.

Black Hat SEO is just the opposite and is not at all recommended. This strategy focuses primarily on “poking holes” in search engine algorithms, or finding loopholes. The intent of Black Hat SEO is to manipulate the search engine ranks in order for your site to receive traffic. Buying links, keyword stuffing, invisible text and spamming blogs are just some of the popular Black Hat strategies. These techniques will not provide long-lasting results – and will most likely get your website blacklisted by search engines sooner rather than later.

An ethical approach to your Search Engine Optimization strategy is paramount to success. While results typically take at least a month to appear, the time and patience you invest in your website will more than likely pay off. As long as you follow the White Hat SEO technique and provide value to Google searchers, your website stands a good chance of ranking in the search engines.

Where Do I Start with SEO?

If you have a website published on the web, then you’ve already started! The very first step to achieving strong search engine rankings is to have your website indexed, or cached, by search engines like Google, Yahoo and Bing.

All major search engines like Google use robots, commonly referred to as “spiders”. These spiders continually crawl the web for new and updated web content to put in their index. When you first publish your website on the internet, you typically need to ask the search engines to visit and index your site. All search engines have a special page for website owners to manually submit their new website to their database. Alternatively, if you have another existing website, you can simply link to your new site. This will prompt search engine spiders to ‘follow’ the link and ultimately index your new site.

Doing Your Keyword Research

The next step to achieving ranks in Google involves researching potential keywords to focus on in your content. Knowing which keywords you want to rank for will give your content strategy plan a clear direction for the future. There are several tools online to help you do this research. The most popular tool is Google’s Adwords Keyword Research Tool (https://adwords.google.com/o/KeywordTool). With this tool you can enter as many potential keywords as you’d like to target, and view what kind of traffic volume they each receive. It also shows how competitive each keyword is in the marketplace. Using this tool, it is very easy to find keywords your website can rank for in the near future.

It is important to find plausible keywords to target. If you start your SEO campaign by targeting single or two-word keywords like “id theft” or “identity protection”, then your initial SEO effort will be difficult. A good initial keyword research strategy is to think of 3 or 4-word keywords that you can target and focus on in your site content. A more plausible keyword to target would be “Child Identity Theft Assistance” or “Child Identity Theft Protection”. These 4-word keyword phrases will have significantly less competition and are thus easier to rank for. Most importantly, they will still generate relevant traffic to your website. As an example, a new website devoted to Identity Theft Protection will take longer to be ranked for “Identity Theft” than for “Child Identity Theft Protection”. Once ranked for this and other long-tail keywords, the new site will be better placed to show up in the results for shorter, more competitive keywords like “Identity Theft”.

Knowing Your Competition

As a general rule in business, knowing your competitor is an important part of success. With SEO, it is no different. Choose one of the keywords you found in your research. Go to Google.com and search for this keyword. The first 10 websites that are displayed are your new competitors. Get to know them.

By looking at the top 10 results for “Child Identity Theft Protection”, you can get an idea of how the competition stacks up to your new website. If the number 1 search result has a blog with very little content, and the last blog post was months or years ago, then you have found a window of opportunity to conquer your competition and gain site traffic. It would be wise to take advantage of this by targeting “Child Identity Theft Protection” in your content in the future.

Writing Website Content – How and Why?

Writing content for your website can sometimes be an intimidating task. Going back to the whole premise of White Hat SEO, you don’t want to write your content for search engines. But then again, your whole goal in creating a website is to rank well with search engines and acquire quality traffic – isn’t it?

The key to writing content on a website is to find a middle ground between human visitors and search engine visitors. A quality website article is written so that any human can easily read and understand it, while at the same time including the keywords you want to rank for in the copy. Getting used to this process may take time; but by analyzing your competitors you can pick up on certain writing styles that do target both kinds of visitors.

Optimizing Your Content for Search Engines

To reiterate, it is a bad SEO strategy to write content for your website for the sole purpose of ranking in the search engines. At face value, this may seem like the most logical thing to do. Of course, Google and all the search engines are able to pick up on this tactic – and they do penalize for it.

There are better ways to go about optimizing your content for search engines. When you first sit down to write your content, consider that there are two very effective ways to begin. For some creative people, it is easy to start with a keyword or two in mind to target. The very first thing they do is write a title for the article with the keyword in it. If you target the keyword “Child Identity Theft Protection”, then a good article title would be “Top 10 Tips for Child Identity Theft Protection”. By diluting the keyword within the title, you avoid being flagged by Google as a website simply trying to manipulate rankings.

It is not always that easy to start with the keyword in mind and write an effective title and article. I find it much easier to start simply with the ‘concept’ of what the article is going to be about. Outline it as you would normally, without thinking about keywords or SEO. Then simply write an effective article. Once you are done, and happy with the content of the post, re-read it and add your keywords where they make sense and seem natural. Once they are appropriately placed in the copy, you can write the title for the post. Remember to include the keyword in the title.

One key concept to note about using keywords in the copy is to use them effectively and naturally for the reader. Do not blatantly integrate your keywords inside your article. Also, do not overemphasize the keyword in the article. Here is an example of how not to write:

“Child identity theft protection is very important. Without a solid Child identity theft protection service, you are in danger.”

This kind of writing style is not recommended. It does not appeal to human readers because it’s choppy and repetitive. And it is not appealing to search engines either because you are “stuffing” keywords wherever you can, as many times as you can. Search engines are highly intelligent, and can very easily pick up on this poor writing style. You will soon face penalties if you pursue this kind of content.

A more ideal way of writing this would be something like:

“Securing your identity online is becoming a difficult task as technology advances. Nobody is safe, not even your children. Thankfully, new child identity theft protection services have begun to show in the marketplace.”

Notice how the keyword is not so bold or overemphasized, and seems to naturally appear in the content. This is how content should be written.

SEO Meta Tags

First, watch the video below to get a perspective on how meta tags influence your rankings: https://youtu.be/RBTBEfd7z_Y

- The rel="canonical" link tag tells the search engine what the authoritative page is, in the event you have similar or duplicate content on your website. Google respects this tag so it’s critical that you study it if you haven’t already.

- The rel="nofollow" link attribute tells search engines not to follow a link from your website. This is important because whenever you link to a separate website from your own, you give up “link juice”, which is something Google admits to using in their ranking algorithm. If you can, try to apply rel="nofollow" to all websites you link out to. Or at the least, the websites you don’t trust (which you shouldn’t be linking to anyway). More on nofollow from Matt Cutts.

- Title tags are still critical. Nothing new here.

- Meta descriptions are still important. Nothing new here either.

Once you have established your first article with keywords in it, you want to further optimize your article through various methods. One of these methods is the use of Meta tags. Meta tags are embedded in the actual HTML code of your web page and are used to provide structured metadata about the page. This code is placed in the <head> section of your web page. Meta tags serve many purposes. In regards to SEO, Meta tags are used to communicate to search engines what the page is about. There are two widely used Meta tags – keywords and description. These are relatively self-explanatory. In the Meta keyword tag, you want to include the keywords you are targeting for that specific page. And for the Meta description tag, you want to provide the description of the page.

Meta tags are a great way of communicating directly with search engines. Of course there are rules that come with this. Generally, you do not want to exceed 100 characters or 45 words in the keyword tag – whichever comes first. For the description tag, you do not want to exceed 150 characters. A common SEO mistake is to “stuff” keywords in this Meta tag, hoping to rank for several different keywords. It simply does not work like that. If you do this you run a high risk of being marked as a spam website. Keep it short and sweet, and try to use the single keyword also used in the title.

Meta Keywords Example: Child identity theft protection, protect against child identity theft, child id theft (85 characters, 12 words)

Meta Description Example: Invest in Child Identity Theft Protection Services and Save Your Children’s Future (82 characters, 12 words)

Also, keep in mind that every page on your website is unique and, therefore, should have a unique title, title tag and meta data. Do not use the same title for multiple pages, and vary the other meta data.
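The length rules above are easy to check before publishing. Here is a minimal Python sketch, assuming the rule-of-thumb limits quoted in this guide (they are not official search engine limits). The function name `build_meta_tags` is hypothetical and used only for illustration.

```python
from html import escape

# Rule-of-thumb limits quoted in this guide (assumptions, not official limits).
KEYWORDS_MAX_CHARS = 100
KEYWORDS_MAX_WORDS = 45
DESCRIPTION_MAX_CHARS = 150


def build_meta_tags(keywords: str, description: str) -> str:
    """Return the two <meta> tags for the <head>, or raise if a limit is exceeded."""
    if len(keywords) > KEYWORDS_MAX_CHARS or len(keywords.split()) > KEYWORDS_MAX_WORDS:
        raise ValueError("keywords tag is too long")
    if len(description) > DESCRIPTION_MAX_CHARS:
        raise ValueError("description tag is too long")
    # escape() keeps quotes and angle brackets from breaking the HTML attribute.
    return (
        f'<meta name="keywords" content="{escape(keywords)}">\n'
        f'<meta name="description" content="{escape(description)}">'
    )


print(build_meta_tags(
    "Child identity theft protection, protect against child identity theft, child id theft",
    "Invest in Child Identity Theft Protection Services and Save Your Children’s Future",
))
```

Running a check like this for every page also makes it easier to spot pages that share the same title or description.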

Off-Page Optimization – Building Links and Link Juice

The three major search engines are Google, Yahoo and Bing. These three search engines are around for a reason: they stay true to their philosophy of a democratically controlled search engine platform. Search engines rank websites according to how many votes they receive. Simply put, a vote is an inbound link to your website. For example, if Wikipedia.org links to your site, you have one vote. But it doesn’t stop there.

The American democratic voting system can be used as a simple metaphor to explain how search engines rank websites. In America, one vote from a wealthy businessman is just as potent as a vote from a Starbucks employee. All votes are equal. The way Google and other major search engines work is similar, but with one major difference. One vote from Wikipedia.org does not equal a vote from Eggleston.com. Why? Wikipedia.org has more authority, also known as “link juice”.

Link juice is an informal term used by many Search Engine Optimizers which refers to the amount of “link currency” a website possesses. As Google roams around the World Wide Web, it looks at how websites link to each other in order to figure out which ones are best. Let’s say that Site A and Site B are both about “Child Identity Theft Protection” and would both like to rank for this keyword. Google takes a look at the links to determine which of these two sites is best. At a very basic level, assume that Site A receives an inbound link that Site B doesn’t. Naturally, Site A will look better to Google and will ultimately outrank Site B. But let’s say that Site A and B both receive an inbound link from Site C. In this scenario, both sites look the same to Google – so in order to determine which site will rank better, Google looks for other ranking criteria.

When a website links to or “votes” for your website, they are leaking link juice to your website for the anchor text keyword. Anchor text is the visible, clickable text of the link to your website, hopefully with keywords inside of it. The words contained in the anchor text can influence the ranking the page will receive from the search engines. When a high-quality website links to yours with anchor text of “Identity Theft Protection”, you immediately gain favor in the search engine ranking results for that specific keyword. For example, a link from a blog post on MSN Money to an article, page or post on your website, where the link text is the actual keywords “Child identity theft protection”, is a great source of link juice for that keyword.

Since not all links are created equal, a link from a website with low link juice will not be as effective as a link from a site with a medium amount of link juice. If Site A receives links from multiple sites, it should theoretically rank higher than Site B. But if Site B has a single website link to them with a high amount of link juice, it will outrank Site A.

Your overall goal when considering a link-building campaign is to make it appear as natural as possible. Do not go and have multiple high-authority websites link to you overnight. That is not a natural linking pattern, and Google will be able to notice it. Take a month or two and spread out your link-building campaign so your search rankings stick. In general, if you build links slowly, you will see results that last. If you go too fast building links to your website, you may not rank for those words for very long. Take your time building your inbound links.

Further Content Optimization and Keyword Density

When multiple competing websites begin ranking for the same keyword, Google first looks at the inbound links each website has. Google sorts their indexed rankings with this in mind. But when websites come close to matching each other’s link juice, Google needs to consider other criteria. One criterion search engines use is keyword density.

Keyword density is defined as the percentage of times a keyword or phrase appears on a web page compared to the total number of words on the page. If a 200-word paragraph about credit scores uses the keyword “credit score” two times, its keyword density is 1%. Search engines use keyword density formulas to determine whether a web page is relevant to a specified keyword or keyword phrase.
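The definition above can be turned into a small calculation. This is a minimal Python sketch of that formula (phrase occurrences divided by total words); the function name `keyword_density` and the simple word-splitting rule are assumptions for illustration, and real search engines do not publish their exact formulas.

```python
import re

# A "word" here is any run of letters, digits or apostrophes (an assumption).
WORD_PATTERN = r"[a-z0-9'’]+"


def keyword_density(text: str, keyword: str) -> float:
    """Return how often the keyword phrase appears, as a percentage of total words."""
    words = re.findall(WORD_PATTERN, text.lower())
    phrase = re.findall(WORD_PATTERN, keyword.lower())
    if not words or not phrase:
        return 0.0
    size = len(phrase)
    # Slide a window over the text and count exact matches of the phrase.
    hits = sum(
        1 for start in range(len(words) - size + 1)
        if words[start:start + size] == phrase
    )
    return 100.0 * hits / len(words)


# A 200-word text that uses "credit score" twice, as in the example above.
sample = "credit score " * 2 + "filler " * 196
print(keyword_density(sample, "credit score"))
```

Used on a finished draft, a check like this gives a quick sanity test before publishing, not a target to write towards.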

In the 1990s, search engines used keyword density as one of their major determining factors when ranking websites. As search engines evolved over the next twenty years, the keyword density factor became less important – but still very relevant.

Google became aware that webmasters were abusing this knowledge and ultimately devalued the importance of keyword density. Website content writers were “stuffing” keywords in any place they could find space. They would cloak keywords inside their website by changing the color of the text to that of their background. These kinds of mischievous actions are considered unethical in the SEO world because you are making it clear to the rest of the world that you only care about achieving high search rankings. More importantly, you are essentially communicating to your potential customers that you don’t care about them. Simply put, it is bad practice.

So now that you know what not to do, what is the right amount of keyword density? Many SEO experts on the web today have come to the conclusion that an optimal keyword density is between 1 and 3 percent. This rule of thumb should help ground you and your content writing style. Of course you want to include your keyword in your page copy – but do it organically through the process of your writing. Find a solid middle ground that sounds good to you and people in your industry, and you should be OK. And again, it is always prudent to investigate your competitors and potentially emulate their successful writing style.

Optimizing Your Images for Search Engines

A good SEO tip when writing content for your site is to include images to help attract your visitor’s interest. Visual aids in general are a good way to grab and keep people’s attention span, and with website content it is no different.

Google Images is a variation of Google Search, only it returns image URLs instead of web page URLs. In terms of Search Engine Optimization, Google Images can provide a huge amount of relevant traffic to your website if you follow five basic rules:

- Find the right images. Finding relevant images that relate to your web copy is of utmost importance – both for humans and search engines. While research shows that text is the first thing viewed on the page, it is the image that ultimately sells the page.

- Use the keyword in the image’s file name when possible. This is very similar to placing keywords in the URL of your pages. By naming the file after your keyword, you are helping the search engines determine the relevancy of your image. If you had a post targeting the keyword “Identity Theft Protection”, then it would be wise to name the image “identity-theft-protection.jpg” or something very similar.

- Create descriptive ALT text. ALT stands for “alternate”, as in alternative text. It is the text typically seen when you mouse-over an image. Originally, it was created and is currently used for vision-impaired web visitors. The ALT text can be ‘read’ so that the image can be shared with the blind, just like the content of the page is. Think about the search engines needing this same kind of additional help, as they can ‘read’ but they cannot ‘see’ your selected image.

- Be sure that your image matches your content. The content surrounding your image should be closely related to what your post is about. The image should be related to your anchor tags, image URL and ALT tags. When these criteria are successfully employed, you help search engines confirm that you are not spamming and that your image is of high quality and relevant to your keyword.

- Don’t stuff keywords! This goes for all kinds of SEO, but it needs to be reiterated over and over again because even intelligent people make mistakes. But making this kind of mistake is very serious, and can land you in a heap of trouble from the search engines.
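The file name and ALT text rules above can be combined in one small helper. This is a minimal Python sketch; the function name `image_tag` and the `/images/` folder are hypothetical and only there for illustration.

```python
import re
from html import escape


def image_tag(keyword: str, alt_text: str, extension: str = "jpg") -> str:
    """Build an <img> tag with a keyword-based file name and descriptive ALT text."""
    # Lowercase the keyword and join its words with dashes for the file name.
    file_name = "-".join(re.findall(r"[a-z0-9]+", keyword.lower())) + "." + extension
    # escape() keeps quotes in the ALT text from breaking the HTML attribute.
    return f'<img src="/images/{file_name}" alt="{escape(alt_text)}">'


print(image_tag(
    "Identity Theft Protection",
    "Family reviewing identity theft protection options",
))
```

The ALT text is written as a plain description of the picture, with the keyword appearing once and naturally.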

It is always a good idea to take a step back from your computer and ask yourself: “Does this appear natural to me as a visitor?” Remember to tailor your SEO practices around human visitors. If you focus too much on what the search engines like to see, you will probably end up making a mistake. Avoid these mistakes by staying true to your White Hat SEO strategy. If you build it right, they will come.

SEO-Friendly URLs

URLs on the World Wide Web come in all shapes and sizes. One of the most common SEO mistakes made today is the lack of an SEO-friendly URL structure. Just as renaming your image URLs to include your keyword is important, so is including keywords in the URL of your web page.

There is a rule of thumb in the SEO industry to keep all URLs “short and sweet”. What this means is that your URL structure should be able to be memorized by a human visitor. Having URLs with excessive or irrelevant characters and words can be a serious detriment to the success of your website in the search engines.

For example, naming a page URL “www.kyleeggleston.com/29439-take-caution-with-your-childrens-id” is a poor choice for a URL – not just for search engines, but also for visitors. Instead, keep the keyword concise in the URL and avoid any unnecessary words or characters. “www.kyleeggleston.com/child-identity-protection” is a much clearer, more concise URL that can be understood by humans and search engines. Moreover, it also includes keywords in the URL, which further describe your page to visitors.

If your website is beginning to grow in content, it may be a good idea to begin categorizing your URLs. If you have an overwhelming amount of content, Google needs to sort it out first. You can help search engines do this by creating a URL structure that categorizes your content. “www.kyleeggleston.com/children/identity-protection” is an example of an SEO-friendly URL structure. You may also notice the use of dashes in the URL. Search engines favor dashes over underscores. The reason for this is because underscores are considered ‘alpha characters’ and do not separate words. Dashes are word separators, but should still not be used excessively at the risk of looking spammy.
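The URL rules in this section (short, keyword-based, categorized, dashes instead of underscores) can be sketched in a few lines of Python. The helper names `slugify` and `build_url` are hypothetical and shown only for illustration.

```python
import re


def slugify(text: str) -> str:
    """Lowercase the text and join its words with dashes (underscores become dashes too)."""
    return "-".join(re.findall(r"[a-z0-9]+", text.lower()))


def build_url(domain: str, *segments: str) -> str:
    """Join a domain with slugified category and page segments, skipping empty ones."""
    slugs = [slugify(segment) for segment in segments]
    return "/".join([domain] + [slug for slug in slugs if slug])


print(build_url("www.kyleeggleston.com", "Children", "Identity Protection"))
```

Because the pattern only keeps letters and digits, stray punctuation and underscores never end up in the final URL.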

The more effort you put into helping visitors understand what your content is about, the more likely you will be able to succeed in the ranking results.

Monitoring and Tracking Your Success with Google Analytics

Let’s assume you have had your website up for about 3 months. You have been posting regularly about twice a week, with keyword density of around 3% and unique titles and meta data. You are off to a great start, and soon you will start reaping the rewards. Once you begin receiving traffic from search engines, it is good practice to get used to analyzing your traffic data using Google Analytics and Site Catalyst from Omniture.

Once your website begins ranking well in search engines, hopefully you will begin to see an influx in traffic. Google Analytics and Site Catalyst allow you to track your traffic by keyword. If you run these reports on a regular basis, you can get an excellent snapshot of how your website is doing in the search engines. By monitoring your keyword traffic, you can determine which keywords need more reinforcement in your future content. Knowing your traffic is important when considering your next step.

Further Optimization via Sitemaps

One of the final tasks you should consider when creating or adding content to your website is the use of a sitemap. A sitemap is a list of pages of a web site that are accessible to search engine spiders or visitors. Typically, sitemaps are organized in a hierarchy. There are two popular ways to create a sitemap. XML sitemaps are structured for search engines, and indicate to search engines the relative importance of your pages to each other. HTML sitemaps are tailored more for human visitors looking to easily navigate through your website. It is usually a good idea to have both XML and HTML sitemaps for your site. Google prefers XML sitemaps, and prefers the sitemap to be added to Google Webmaster Tools, where Google shares information about the health of the optimization of your website.
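To make the XML sitemap idea concrete, here is a minimal Python sketch that writes one using the standard sitemap protocol elements (`urlset`, `url`, `loc`, `priority`). The function name `build_sitemap` and the example.com URLs are placeholders for illustration; the `priority` value is how a page’s relative importance is expressed.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemap protocol (sitemaps.org).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, float]]) -> str:
    """Build an XML sitemap from (url, priority) pairs; priority runs from 0.0 to 1.0."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, priority in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "priority").text = f"{priority:.1f}"
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder pages: the homepage is marked as more important than the article.
print(build_sitemap([
    ("https://www.example.com/", 1.0),
    ("https://www.example.com/children/identity-protection", 0.8),
]))
```

The resulting text would be saved as a file such as sitemap.xml at the root of the site and then submitted to the search engine.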

Search Engine Optimization and the Future

Search engines are always changing, learning and evolving. Employees at Google, Yahoo and Bing are constantly trying to better their search engine results by analyzing what makes a website relevant to a particular keyword. Although search engines modify their algorithms just about every day, they have not, and will not, deviate too far from their original criteria for ranking websites. Quality content and link building will always reign as King of the Internet. And while Search Engine Optimization can seem unnecessarily complex at times, it truly will be the simple things you follow that will get your rankings.

Be patient with your site and be honest with your visitors. If you follow these White Hat SEO principles and do not experiment with the “dark side”, you have nothing to worry about. Stay true to your link building and content strategy and watch your site climb the ranks.

Mobile and Local SEO: How Google Evolved and Adapted to a New World

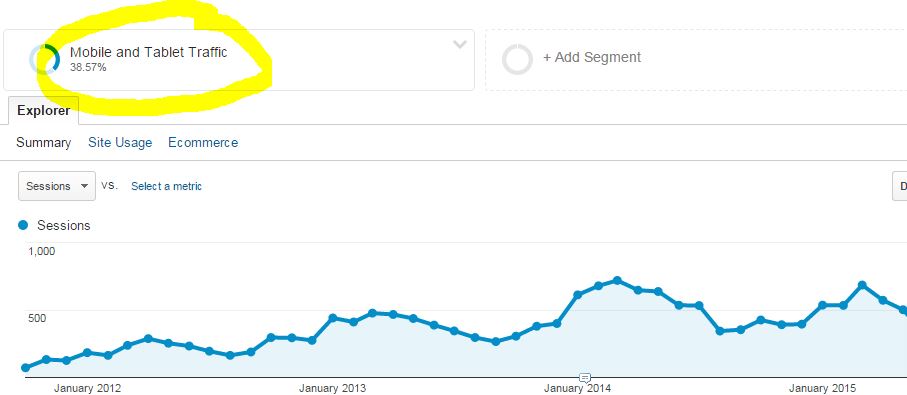

In 2015 and 2016, Google made several algorithm changes, including the notorious “Mobilegeddon” update, which improved the rankings for mobile-friendly websites. This should surprise no one, since 91% of adults in the United States use a smartphone and/or tablet device every day. Since Google’s mission is to provide the world with information, it was forced to adopt a new search experience for its users. Google’s goal is to stay ahead of technology trends like these. Therefore, you can expect Google to always be making changes to its search engine ranking algorithm and its user experience. Notice how you keep seeing more and more local business listings in your search results? That is no coincidence!

Google’s motivations are not entirely altruistic, however. Google is a subsidiary of Alphabet, a publicly traded company whose shareholders expect profit above all else. So while Google’s search engine changes its algorithm and the way it displays its information, remember that its main objective is to sell advertising space, which accounts for about 90% of its total revenue. As Google evolves, expect to see more of the following:

1. More ads in Google local business search and map results

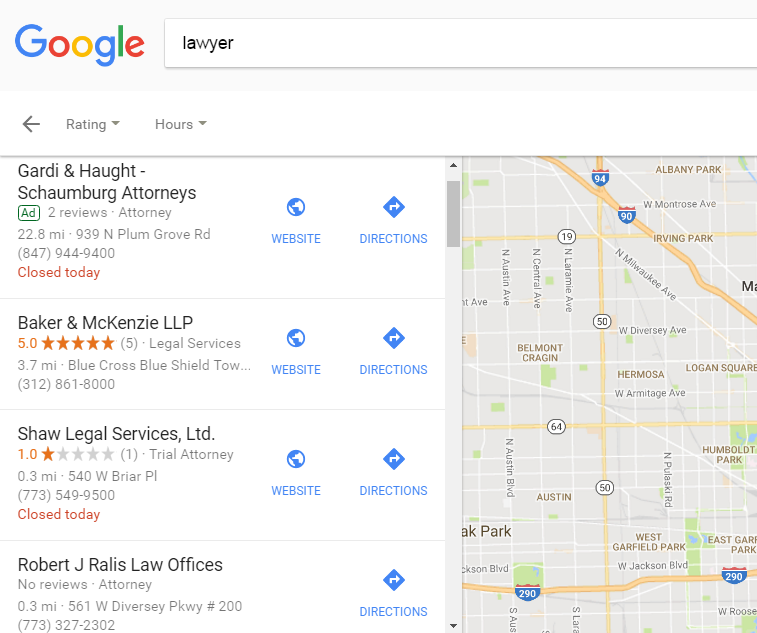

In 2016 Google began showing advertisements in the local business results in nearly every vertical. The new local ad space, listed at the #1 position, effectively lowers the organic search results by one ranking position. Local businesses who achieved #1 ranking positions in the local map pack are no longer truly #1.

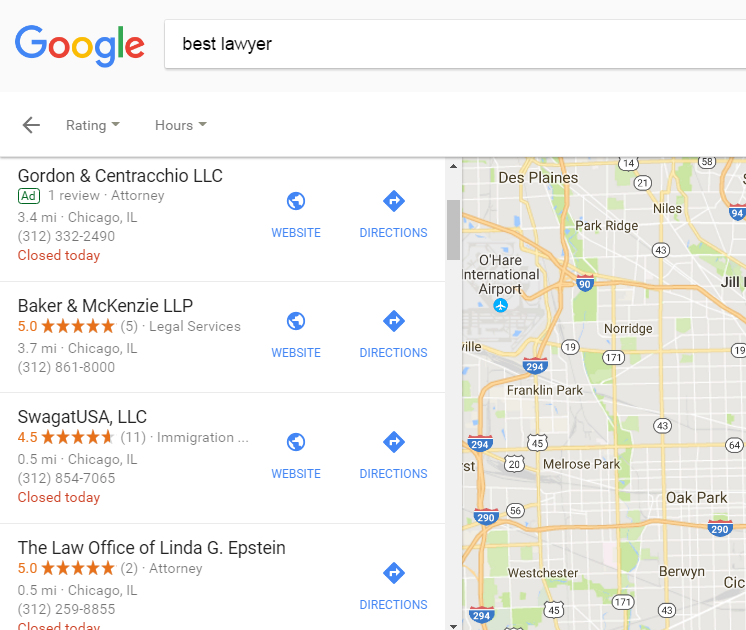

2. 5-star reviews are more important than ever in Google Maps’ local search results, especially for keywords using the word “best”

In 2017, Google began automatically filtering out local businesses with review scores under 4.0 stars in their Google business listing. It’s important to ask your clients to leave positive reviews as soon as you can and as often as you can. The more time passes, the less likely your customer is to leave a review. Be sure to capture your client’s emotional satisfaction after visiting your business. Your Google reviews are becoming more and more important to growing your local Google Maps rankings.

“lawyer” local business results with low-quality reviews

“best lawyer” Google local business results with low-quality reviews

3. Consider a local advertising campaign with Google Ads to supplement your organic rankings

The local search engine landscape has become more competitive with the continued adoption of smartphone and mobile devices. Google is starting to show more ads, not only because their stockholders want to keep the cash flowing, but because people like you and me engage with them. Google displays highly targeted, highly relevant and most importantly, Geo-oriented advertisements that help us find what we are searching for more easily. More than 50% of all consumers “Google” their local business before visiting. Google is now tailoring their advertisements to that channel. It’s important to consider a local Google Ads campaign, targeting areas your business serves. Being first in Google in the organic results no longer means you are #1. It means you are #2 or perhaps #3. Be sure to reconsider the value of an effective paid local search strategy in addition to your organic strategy.

Dramatic increase in mobile traffic

Google is making great strides in local and that’s why I’m starting the best practices guide with Local. The Web is increasingly local because of the exponential growth of mobile devices, driven by their affordability across the world. Google understands this trend (they own Android for God’s sake) and is actively pursuing a more intuitive local search experience. As mobile phone usage increases over the next 10 years, so will attention towards the local search experience. Local search marketing is an extremely important marketing channel to focus on when planning your SEO strategy, whether you are a service-based or brick-and-mortar business.

The keys to successful local SEO rankings include, but are not limited to:

- Make your website mobile-friendly and be sure to test it using Google’s mobile usability tool.

- Verify your business on business.google.com

- Populate your Google listing with a vivid description, add pictures, add accurate categories (don’t spam) and link back to your website homepage or contact page.

- Build citations on high-authority websites. Where you get your citations will differ depending on your business type. Thankfully, Moz put together a top citations source list sorted by business category as a free tool. I don’t recommend automated citation building services, including Yext, Moz, Bright Local, etc. I’ve used them all, and can attest that you can save money by creating your citations manually.

- Use JSON-LD schema markup to mark up your location data. Read Google’s instructions on how to properly install JSON-LD schema on your website. It’s relatively easy and takes about 10 minutes.

- Find and remove duplicate listings on websites like YP.com, Facebook, Foursquare, Google, etc. Duplicate listings are a known negative ranking factor with Google’s local ranking algorithm.

- Use Google Search Console to track website IMPRESSIONS. Remember, most people searching for a local business, especially from their mobile phone, will not actually click through to your website. Impressions are the better key performance indicator when measuring local SEO success.
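The JSON-LD step in the list above can be sketched as follows. This is a minimal Python example that prints a schema.org `LocalBusiness` block ready to paste into a page; the function name `local_business_jsonld` and all of the business details are made-up placeholders, and Google’s own instructions remain the reference for which properties to include.

```python
import json


def local_business_jsonld(name: str, url: str, phone: str, street: str,
                          city: str, region: str, postal_code: str) -> str:
    """Return a <script> block holding schema.org LocalBusiness data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Placeholder business details, for illustration only.
print(local_business_jsonld(
    name="Example Identity Protection Co.",
    url="https://www.example.com/",
    phone="+1-555-0100",
    street="123 Main Street",
    city="Springfield",
    region="IL",
    postal_code="62701",
))
```

The name, address and phone number in the markup should match the ones used in your Google listing and citations.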